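Previous articles

- "A Chronicle of Cloud Computing, 1725-2023 (Second Edition)"

- "Virtualization Technology: Hardware-Assisted Virtualization"

- "Virtualization Technology: QEMU-KVM, the Kernel-based Virtual Machine"

- "Virtualization Technology: VirtIO, the Virtual Device Interface Standard"

- "Virtualization Technology: Linux Kernel Virtual Network Devices"

- "Virtualization Technology: Building KVM Multi-Plane Networks with Bridge and VLAN Sub-interfaces"

- "Virtualization Technology: VirtIO Networking Virtual Network Devices"

- "Virtualization Technology: Libvirt, a Heterogeneous Virtualization Management Component"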

Contents

- OpenStack architecture

- Conceptual architecture

- Logical architecture

- Network options

- Networking Option 1: Provider networks

- Networking Option 2: Self-service networks

- Two-node deployment network topology

- Base services

- DNS name resolution

- NTP time synchronization

- YUM repositories

- MySQL database

- RabbitMQ message queue

- Memcached

- Etcd

- OpenStack Projects

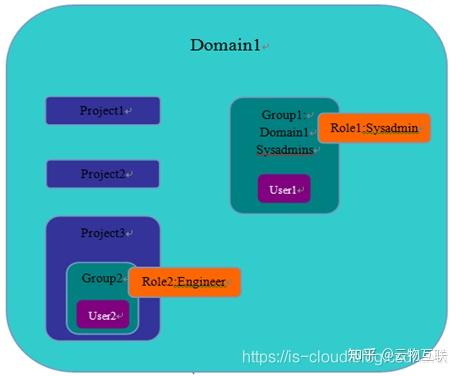

- Keystone(Controller)

- Glance(Controller)

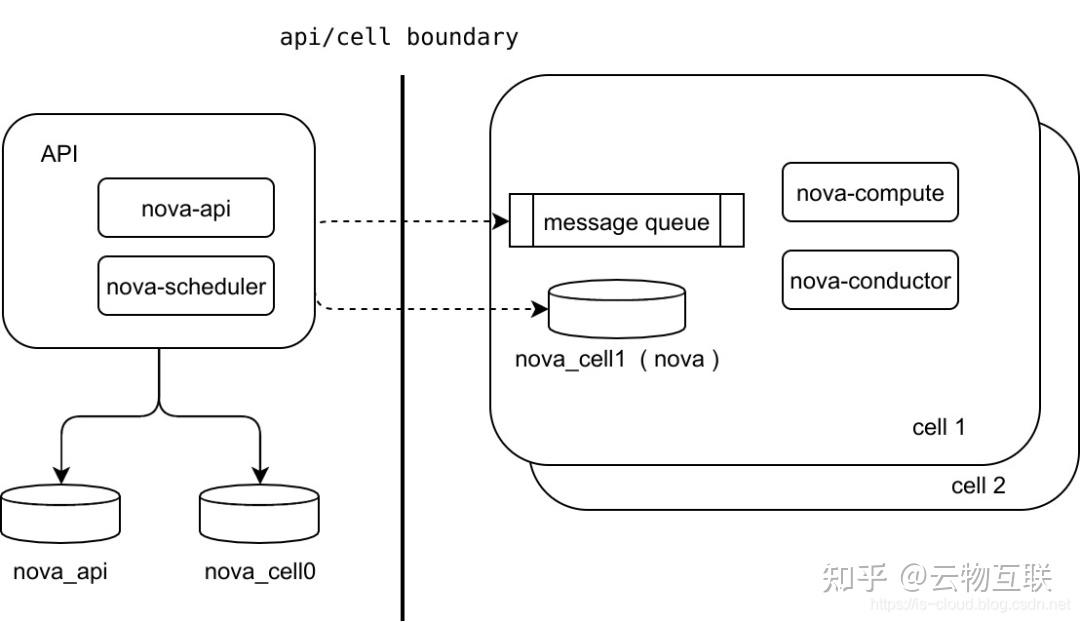

- Nova(Controller)

- Nova(Compute)

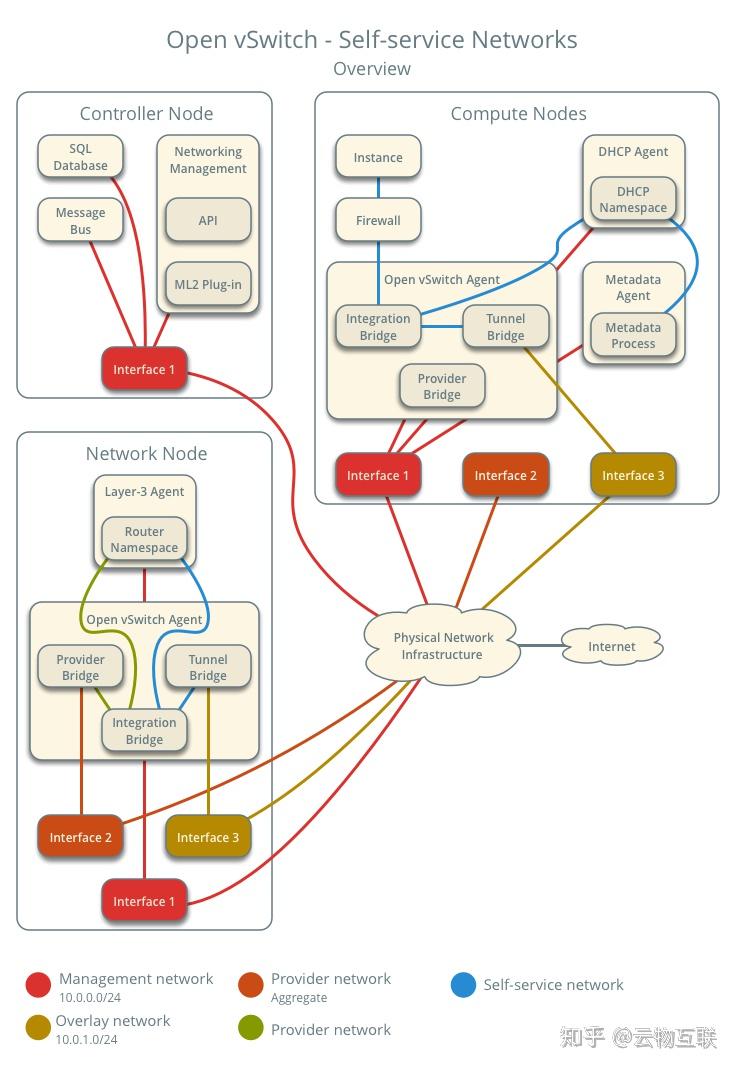

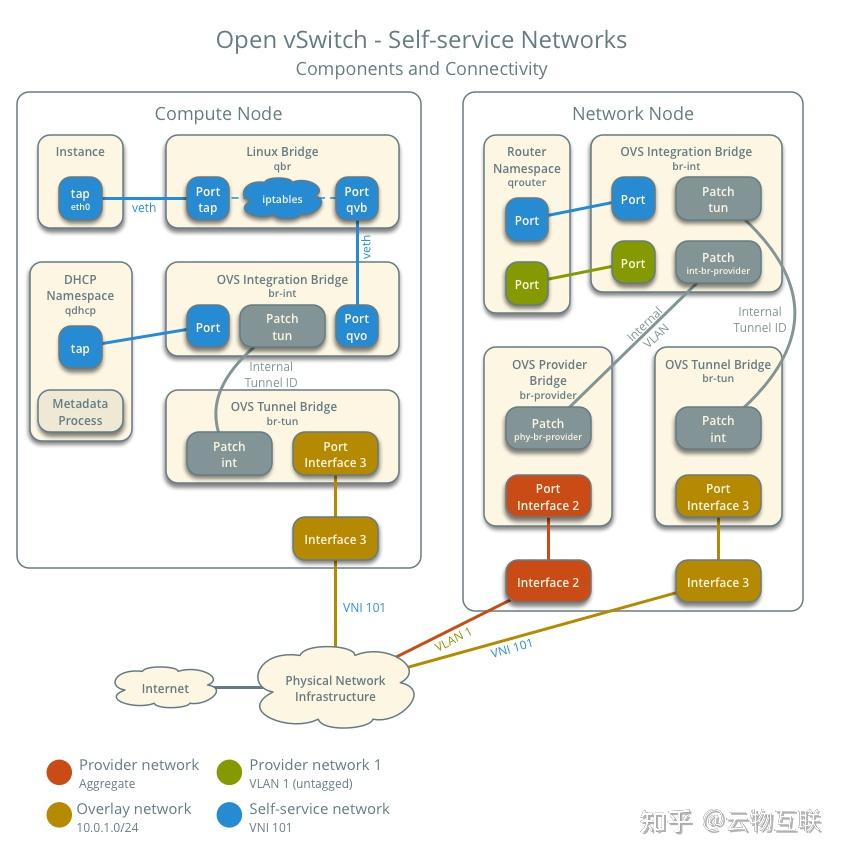

- Neutron Open vSwitch mechanism driver(Controller)

- Neutron Open vSwitch mechanism driver(Compute)

- Horizon(Controller)

- Cinder(Controller)

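Preface

Automated deployment tools have long been a focus of OpenStack development, and tools such as Kolla and TripleO are now well established. This article instead records a fully manual deployment, as a way to understand the OpenStack software architecture in more depth.

- For stability, the relatively early Rocky release was chosen;

- For ease of management, a two-node deployment architecture was chosen.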

OpenStack architecture

- Official documentation: https://docs.openstack.org/install-guide/

Conceptual architecture

Logical architecture

Network options

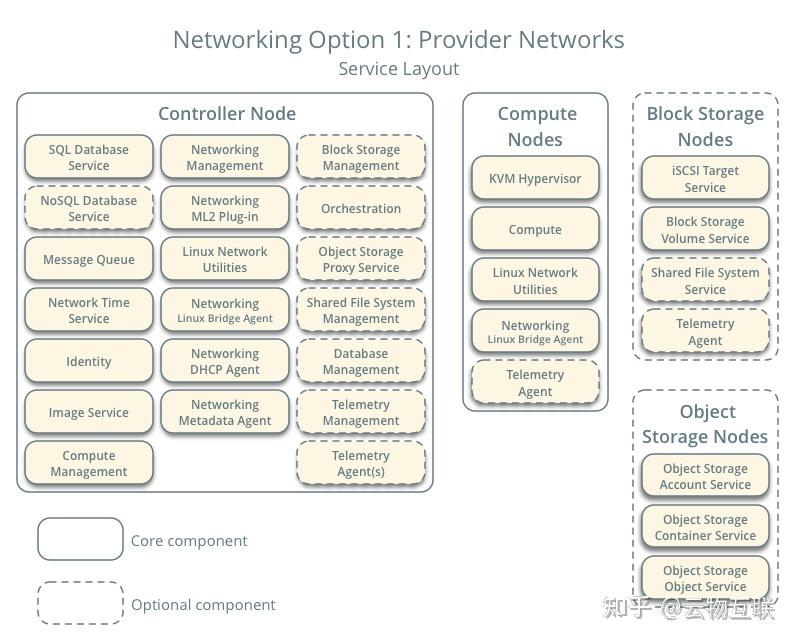

Networking Option 1: Provider networks

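Provider networks bridge the nodes' virtual networks onto the operator's physical network (e.g. L2/L3 switches and routers). This is a relatively simple network model, and because physical network devices carry the traffic, it also performs better. However, Neutron does not run the L3 router service in this option, so advanced features such as LBaaS and FWaaS are not supported.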

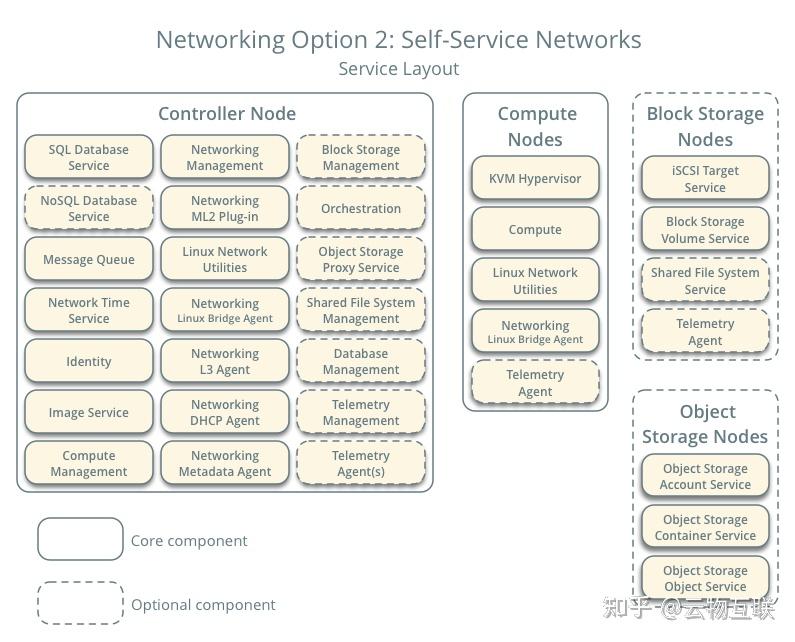

Networking Option 2: Self-service networks

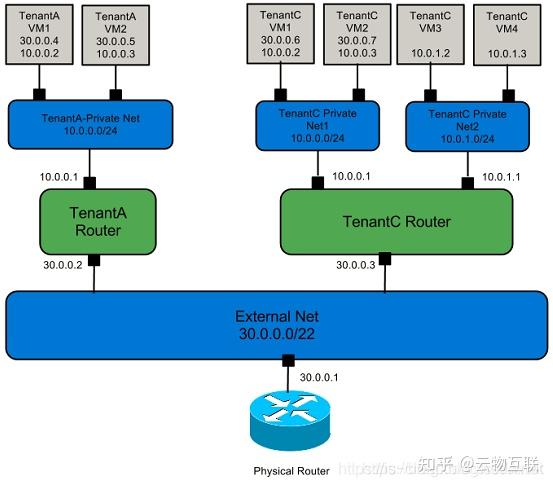

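Self-service networks are a complete L2 and L3 network virtualization solution. Users can create virtual networks without any knowledge of the underlying physical network topology, and Neutron provides them with multi-tenant isolated, multi-plane networks.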

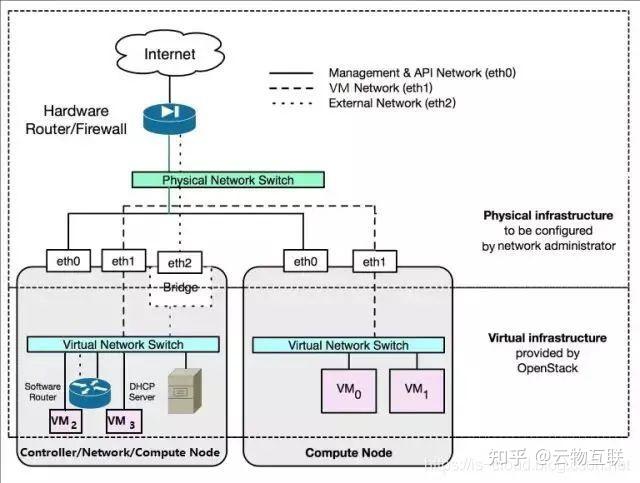

Two-node deployment network topology

Controller

- ens160: 172.18.22.231/24

- ens192: 10.0.0.1/24

- ens224: br-provider NIC

- sda: system disk

- sdb: Cinder storage disk

Compute

- ens160: 172.18.22.232/24

- ens192: 10.0.0.2/24

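- sda: system disk

NOTE: "fanguiju" below is a placeholder password; replace it with your own.

Base services

DNS name resolution

NOTE: The hosts file is used in place of DNS.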

- [root@controller ~]# cat /etc/hosts

- 127.0.0.1 controller localhost localhost.localdomain localhost4 localhost4.localdomain4

- ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

- 172.18.22.231 controller

- 172.18.22.232 compute

- [root@compute ~]# cat /etc/hosts

- 127.0.0.1 controller localhost localhost.localdomain localhost4 localhost4.localdomain4

- ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

- 172.18.22.231 controller

- 172.18.22.232 compute
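Since the hosts files stand in for DNS here, it can help to check which address a node name maps to. The following is a minimal sketch, assuming a hosts-format file; `hosts_lookup` is a hypothetical helper written for this illustration, not part of any tool used in this article. It reports the address on the first line that lists the name.

```shell
# hosts_lookup FILE NAME
# Print the address of the first entry in a hosts-format FILE that lists NAME.
# Prints nothing if NAME is not present. (Hypothetical helper for illustration.)
hosts_lookup() {
  local file=$1 name=$2
  awk -v name="$name" '
    /^[ \t]*#/ { next }                  # skip comment lines
    {
      for (i = 2; i <= NF; i++) {
        if ($i ~ /^#/) break             # ignore trailing comments
        if ($i == name) { print $1; exit }
      }
    }' "$file"
}
```

In this topology the expected answer for `hosts_lookup /etc/hosts controller` is 172.18.22.231. Any other result suggests the name is also listed on an earlier line, such as the 127.0.0.1 entry.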

NTP time synchronization

- [root@controller ~]# cat /etc/chrony.conf | grep -v ^# | grep -v ^$

- server 0.centos.pool.ntp.org iburst

- server 1.centos.pool.ntp.org iburst

- server 2.centos.pool.ntp.org iburst

- server 3.centos.pool.ntp.org iburst

- driftfile /var/lib/chrony/drift

- makestep 1.0 3

- rtcsync

- allow 172.18.22.0/24

- logdir /var/log/chrony

- [root@controller ~]# systemctl enable chronyd.service

- [root@controller ~]# systemctl start chronyd.service

- [root@controller ~]# chronyc sources

- 210 Number of sources = 4

- MS Name/IP address Stratum Poll Reach LastRx Last sample

- ===============================================================================

- ^+ ntp1.ams1.nl.leaseweb.net 2 6 77 24 -4781us[-6335us] +/- 178ms

- ^? static.186.49.130.94.cli> 0 8 0 - +0ns[ +0ns] +/- 0ns

- ^? sv1.ggsrv.de 2 7 1 17 -36ms[ -36ms] +/- 130ms

- ^* 124-108-20-1.static.beta> 2 6 77 24 +382us[-1172us] +/- 135ms

- [root@compute ~]# cat /etc/chrony.conf | grep -v ^# | grep -v ^$

- server controller iburst

- driftfile /var/lib/chrony/drift

- makestep 1.0 3

- rtcsync

- logdir /var/log/chrony

- [root@compute ~]# systemctl enable chronyd.service

- [root@compute ~]# systemctl start chronyd.service

- [root@compute ~]# chronyc sources

- 210 Number of sources = 1

- MS Name/IP address Stratum Poll Reach LastRx Last sample

- ===============================================================================

- ^? controller 0 7 0 - +0ns[ +0ns] +/- 0ns
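In `chronyc sources` output, the source chronyd is currently synchronized to is marked `^*`, while `^?` marks a source that is unreachable or not yet usable. A minimal sketch of a check built on that marker follows; `chrony_has_selected_source` is a hypothetical helper name.

```shell
# Read `chronyc sources` output on stdin.
# Succeed (exit 0) if any line starts with "^*", i.e. a source is selected.
chrony_has_selected_source() {
  local line found=1
  while IFS= read -r line; do
    case $line in
      '^*'*) found=0 ;;   # "^*" marks the currently selected source
    esac
  done
  return "$found"
}
```

A possible use is `chronyc sources | chrony_has_selected_source || echo "not synchronized yet"`. The compute node output above shows `^?` with a reach of 0, which can happen right after chronyd starts, so rerunning the check a little later shows whether synchronization has settled.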

YUM repositories

- $ yum install centos-release-openstack-rocky -y

- $ yum upgrade -y

- $ yum install python-openstackclient -y

- $ yum install openstack-selinux -y

MySQL database

- $ yum install mariadb mariadb-server python2-PyMySQL -y

- [root@controller ~]# cat /etc/my.cnf.d/openstack.cnf

- [mysqld]

- bind-address = 172.18.22.231

- default-storage-engine = innodb

- innodb_file_per_table = on

- max_connections = 4096

- collation-server = utf8_general_ci

- character-set-server = utf8

- [root@controller ~]# systemctl enable mariadb.service

- [root@controller ~]# systemctl start mariadb.service

- [root@controller ~]# systemctl status mariadb.service

- # Initialize the MySQL database password

- [root@controller ~]# mysql_secure_installation

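TS: Many OpenStack services access the MySQL database, so some MySQL parameters need tuning, for example raising the maximum number of connections, shortening the connection wait timeout, and setting the interval at which idle connections are cleaned up. e.g.

- [root@controller ~]# cat /etc/my.cnf | grep -v ^$ | grep -v ^#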

- [client-server]

- [mysqld]

- symbolic-links=0

- max_connections=1000

- wait_timeout=5

- # interactive_timeout = 600

- !includedir /etc/my.cnf.d

RabbitMQ message queue

- $ yum install rabbitmq-server -y

- [root@controller ~]# systemctl enable rabbitmq-server.service

- [root@controller ~]# systemctl start rabbitmq-server.service

- [root@controller ~]# systemctl status rabbitmq-server.service

- # Initialize the RabbitMQ user, password, and permissions

- [root@controller ~]# rabbitmqctl add_user openstack fanguiju

- [root@controller ~]# rabbitmqctl set_permissions openstack ".*" ".*" ".*"

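TS: rabbitmqctl can fail with a nodedown error when the node's hostname does not resolve consistently (see the hostname mismatch hint in the diagnostics):

- Error: unable to connect to node rabbit@localhost: nodedown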

- DIAGNOSTICS

- ===========

- attempted to contact: [rabbit@localhost]

- rabbit@localhost:

- * connected to epmd (port 4369) on localhost

- * epmd reports node 'rabbit' running on port 25672

- * TCP connection succeeded but Erlang distribution failed

- * Hostname mismatch: node "rabbit@controller" believes its host is different. Please ensure that hostnames resolve the same way locally and on "rabbit@controller"

- current node details:

- - node name: 'rabbitmq-cli-50@controller'

- - home dir: /var/lib/rabbitmq

- - cookie hash: J6O4pu2pK+BQLf1TTaZSwQ==

Memcached

The Identity service authentication mechanism for services uses Memcached to cache tokens. The memcached service typically runs on the controller node. For production deployments, we recommend enabling a combination of firewalling, authentication, and encryption to secure it.

- $ yum install memcached python-memcached -y

- [root@controller ~]# cat /etc/sysconfig/memcached

- PORT="11211"

- USER="memcached"

- MAXCONN="1024"

- CACHESIZE="64"

- # OPTIONS="-l 127.0.0.1,::1"

- OPTIONS="-l 127.0.0.1,::1,controller"

- [root@controller ~]# systemctl enable memcached.service

- [root@controller ~]# systemctl start memcached.service

- [root@controller ~]# systemctl status memcached.service

Etcd

OpenStack services may use Etcd, a distributed reliable key-value store for distributed key locking, storing configuration, keeping track of service live-ness and other scenarios.

- $ yum install etcd -y

- [root@controller ~]# cat /etc/etcd/etcd.conf

- #[Member]

- ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

- ETCD_LISTEN_PEER_URLS="http://172.18.22.231:2380"

- ETCD_LISTEN_CLIENT_URLS="http://172.18.22.231:2379"

- ETCD_NAME="controller"

- #[Clustering]

- ETCD_INITIAL_ADVERTISE_PEER_URLS="http://172.18.22.231:2380"

- ETCD_ADVERTISE_CLIENT_URLS="http://172.18.22.231:2379"

- ETCD_INITIAL_CLUSTER="controller=http://172.18.22.231:2380"

- ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-01"

- ETCD_INITIAL_CLUSTER_STATE="new"

- [root@controller ~]# systemctl enable etcd

- [root@controller ~]# systemctl start etcd

- [root@controller ~]# systemctl status etcd
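In the single-member configuration above, the member name in ETCD_NAME has to match the key used in ETCD_INITIAL_CLUSTER, and every URL uses the controller's management address. The sketch below generates the same file from those two values so they cannot drift apart. `render_etcd_conf` is a hypothetical helper, and the ports and cluster token are copied from the listing above.

```shell
# render_etcd_conf NAME IP
# Print a single-member etcd.conf for the given member name and management IP.
# (Hypothetical helper; ports 2379/2380 and the token mirror the article.)
render_etcd_conf() {
  local name=$1 ip=$2
  cat <<EOF
#[Member]
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="http://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="http://${ip}:2379"
ETCD_NAME="${name}"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="http://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="http://${ip}:2379"
ETCD_INITIAL_CLUSTER="${name}=http://${ip}:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-01"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}
```

For this deployment the call would be `render_etcd_conf controller 172.18.22.231 > /etc/etcd/etcd.conf`, run before etcd is started.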

OpenStack Projects

Keystone(Controller)

- $ yum install openstack-keystone httpd mod_wsgi -y

- # /etc/keystone/keystone.conf

- [database]

- connection = mysql+pymysql://keystone:fanguiju@controller/keystone

- [token]

- provider = fernet

- $ CREATE DATABASE keystone;

- $ GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY 'fanguiju';

- $ su -s /bin/sh -c "keystone-manage db_sync" keystone

- $ keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

- $ keystone-manage credential_setup --keystone-user keystone --keystone-group keystone

- Bootstrap the Keystone services. This automatically creates the default domain, the admin project, the admin user (with its password), the admin, member, and reader roles, and the keystone service with its identity endpoints.

- $ keystone-manage bootstrap --bootstrap-password fanguiju \

- --bootstrap-admin-url http://controller:5000/v3/ \

- --bootstrap-internal-url http://controller:5000/v3/ \

- --bootstrap-public-url http://controller:5000/v3/ \

- --bootstrap-region-id RegionOne

- Configure and start the Apache HTTP server: Keystone's web server runs on top of the Apache HTTP server, as an httpd virtual host.

- $ ln -s /usr/share/keystone/wsgi-keystone.conf /etc/httpd/conf.d/

- # /usr/share/keystone/wsgi-keystone.conf

- # keystone virtual host configuration file

- Listen 5000

- <VirtualHost *:5000>

- WSGIDaemonProcess keystone-public processes=5 threads=1 user=keystone group=keystone display-name=%{GROUP}

- WSGIProcessGroup keystone-public

- WSGIScriptAlias / /usr/bin/keystone-wsgi-public

- WSGIApplicationGroup %{GLOBAL}

- WSGIPassAuthorization On

- LimitRequestBody 114688

- <IfVersion >= 2.4>

- ErrorLogFormat "%{cu}t %M"

- </IfVersion>

- ErrorLog /var/log/httpd/keystone.log

- CustomLog /var/log/httpd/keystone_access.log combined

- <Directory /usr/bin>

- <IfVersion >= 2.4>

- Require all granted

- </IfVersion>

- <IfVersion < 2.4>

- Order allow,deny

- Allow from all

- </IfVersion>

- </Directory>

- </VirtualHost>

- Alias /identity /usr/bin/keystone-wsgi-public

- <Location /identity>

- SetHandler wsgi-script

- Options +ExecCGI

- WSGIProcessGroup keystone-public

- WSGIApplicationGroup %{GLOBAL}

- WSGIPassAuthorization On

- </Location>

- # /etc/httpd/conf/httpd.conf

- ServerName controller

- $ systemctl enable httpd.service

- $ systemctl start httpd.service

- $ systemctl status httpd.service

Set temporary authentication environment variables:

- export OS_USERNAME=admin

- export OS_PASSWORD=fanguiju

- export OS_PROJECT_NAME=admin

- export OS_USER_DOMAIN_NAME=Default

- export OS_PROJECT_DOMAIN_NAME=Default

- export OS_AUTH_URL=http://controller:5000/v3

- export OS_IDENTITY_API_VERSION=3

- $ openstack project create --domain default --description "Service Project" service

- $ openstack project create --domain default --description "Demo Project" myproject

- $ openstack user create --domain default --password-prompt myuser

- $ openstack role create myrole

- $ openstack role add --project myproject --user myuser myrole

- [root@controller ~]# openstack domain list

- +---------+---------+---------+--------------------+

- | ID | Name | Enabled | Description |

- +---------+---------+---------+--------------------+

- | default | Default | True | The default domain |

- +---------+---------+---------+--------------------+

- [root@controller ~]# openstack project list

- +----------------------------------+-----------+

- | ID | Name |

- +----------------------------------+-----------+

- | 64e45ce71e4843f3af4715d165f417b6 | service |

- | a2b55e37121042a1862275a9bc9b0223 | admin |

- | a50bbb6cd831484d934eb03f989b988b | myproject |

- +----------------------------------+-----------+

- [root@controller ~]# openstack group list

- [root@controller ~]# openstack user list

- +----------------------------------+--------+

- | ID | Name |

- +----------------------------------+--------+

- | 2cd4bbe862e54afe9292107928338f3f | myuser |

- | 92602c24daa24f019f05ecb95f1ce68e | admin |

- +----------------------------------+--------+

- [root@controller ~]# openstack role list

- +----------------------------------+--------+

- | ID | Name |

- +----------------------------------+--------+

- | 3bc0396aae414b5d96488d974a301405 | reader |

- | 811f5caa2ac747a5b61fe91ab93f2f2f | myrole |

- | 9366e60815bc4f1d80b1e57d51f7c228 | admin |

- | d9e0d3e5d1954feeb81e353117c15340 | member |

- +----------------------------------+--------+

- [root@controller ~]# unset OS_AUTH_URL OS_PASSWORD

- [root@controller ~]# openstack --os-auth-url http://controller:5000/v3 \

- > --os-project-domain-name Default --os-user-domain-name Default \

- > --os-project-name admin --os-username admin token issue

- Password:

- +------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

- | Field | Value |

- +------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

- | expires | 2019-03-29T12:36:47+0000 |

- | id | gAAAAABcngNPjXntVhVAmLbek0MH7ZSzeYGC4cfipy4E3aiy_dRjEyJiPehNH2dkDVI94vHHHdni1h27BJvLp6gqIqglGVDHallPn3PqgZt3-JMq_dyxx2euQL1bhSNX9rAUbBvzL9_0LBPKw2glQmmRli9Qhu8QUz5tRkbxAb6iP7R2o-mU30Y |

- | project_id | a2b55e37121042a1862275a9bc9b0223 |

- | user_id | 92602c24daa24f019f05ecb95f1ce68e |

- +------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

- Create OpenStack client environment scripts

- [root@controller ~]# cat adminrc

- export OS_PROJECT_DOMAIN_NAME=Default

- export OS_USER_DOMAIN_NAME=Default

- export OS_PROJECT_NAME=admin

- export OS_USERNAME=admin

- export OS_PASSWORD=fanguiju

- export OS_AUTH_URL=http://controller:5000/v3

- export OS_IDENTITY_API_VERSION=3

- export OS_IMAGE_API_VERSION=2

- [root@controller ~]# source adminrc

- [root@controller ~]# openstack catalog list

- +----------+----------+----------------------------------------+

- | Name | Type | Endpoints |

- +----------+----------+----------------------------------------+

- | keystone | identity | RegionOne |

- | | | admin: http://controller:5000/v3/ |

- | | | RegionOne |

- | | | public: http://controller:5000/v3/ |

- | | | RegionOne |

- | | | internal: http://controller:5000/v3/ |

- | | | |

- +----------+----------+----------------------------------------+
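The adminrc file above is a fixed set of exports in which only the user, project, and password vary. As a minimal sketch, the hypothetical helper `render_openrc` below prints such a file for any user, assuming the Default domain and the http://controller:5000/v3 endpoint used in this article, and assuming the values contain no shell metacharacters.

```shell
# render_openrc USERNAME PROJECT PASSWORD
# Print an OpenStack client environment script for the given credentials.
# (Hypothetical helper; domain and auth URL are the ones used in this article.)
render_openrc() {
  local user=$1 project=$2 password=$3
  cat <<EOF
export OS_PROJECT_DOMAIN_NAME=Default
export OS_USER_DOMAIN_NAME=Default
export OS_PROJECT_NAME=${project}
export OS_USERNAME=${user}
export OS_PASSWORD=${password}
export OS_AUTH_URL=http://controller:5000/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2
EOF
}
```

For example, `render_openrc myuser myproject 'MYUSER_PASSWORD' > myuserrc` would produce a script for the demo user created earlier, where MYUSER_PASSWORD stands for whatever was entered at the password prompt.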

Glance(Controller)

- $ openstack service create --name glance --description "OpenStack Image" image

- $ openstack user create --domain default --password-prompt glance

- $ openstack role add --project service --user glance admin

- $ openstack endpoint create --region RegionOne image public http://controller:9292

- $ openstack endpoint create --region RegionOne image internal http://controller:9292

- $ openstack endpoint create --region RegionOne image admin http://controller:9292

- [root@controller ~]# openstack catalog list

- +-----------+-----------+-----------------------------------------+

- | Name | Type | Endpoints |

- +-----------+-----------+-----------------------------------------+

- | glance | image | RegionOne |

- | | | admin: http://controller:9292 |

- | | | RegionOne |

- | | | public: http://controller:9292 |

- | | | RegionOne |

- | | | internal: http://controller:9292 |

- | | | |

- | keystone | identity | RegionOne |

- | | | admin: http://controller:5000/v3/ |

- | | | RegionOne |

- | | | public: http://controller:5000/v3/ |

- | | | RegionOne |

- | | | internal: http://controller:5000/v3/ |

- | | | |

- +-----------+-----------+-----------------------------------------+
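Registering a service in the Keystone catalog follows the same six commands each time: create the service, create its user, grant that user the admin role on the service project, then create the public, internal, and admin endpoints. The sketch below only prints those commands so they can be reviewed first. `print_service_registration` is a hypothetical helper, and it assumes the service user is named after the service, as with glance above.

```shell
# print_service_registration NAME TYPE DESCRIPTION URL
# Print (not run) the catalog registration commands for one service.
# (Hypothetical helper; command syntax copied from the steps above.)
print_service_registration() {
  local name=$1 type=$2 desc=$3 url=$4 iface
  printf 'openstack service create --name %s --description "%s" %s\n' "$name" "$desc" "$type"
  printf 'openstack user create --domain default --password-prompt %s\n' "$name"
  printf 'openstack role add --project service --user %s admin\n' "$name"
  for iface in public internal admin; do
    printf 'openstack endpoint create --region RegionOne %s %s %s\n' "$type" "$iface" "$url"
  done
}
```

Calling `print_service_registration glance image "OpenStack Image" http://controller:9292` reproduces the Glance commands shown above.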

- $ yum install openstack-glance -y

- # /etc/glance/glance-api.conf

- [glance_store]

- stores = file,http

- default_store = file

- # Local directory where image files are stored

- filesystem_store_datadir = /var/lib/glance/images/

- [database]

- connection = mysql+pymysql://glance:fanguiju@controller/glance

- [keystone_authtoken]

- auth_uri = http://controller:5000

- auth_url = http://controller:5000

- memcached_servers = controller:11211

- auth_type = password

- project_domain_name = Default

- user_domain_name = Default

- project_name = service

- username = glance

- password = fanguiju

- [paste_deploy]

- flavor = keystone

- # /etc/glance/glance-registry.conf

- [database]

- connection = mysql+pymysql://glance:fanguiju@controller/glance

- [keystone_authtoken]

- auth_uri = http://controller:5000

- auth_url = http://controller:5000

- memcached_servers = controller:11211

- auth_type = password

- project_domain_name = Default

- user_domain_name = Default

- project_name = service

- username = glance

- password = fanguiju

- [paste_deploy]

- flavor = keystone

- $ CREATE DATABASE glance;

- $ GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY 'fanguiju';

- $ su -s /bin/sh -c "glance-manage db_sync" glance

- $ systemctl enable openstack-glance-api.service openstack-glance-registry.service

- $ systemctl start openstack-glance-api.service openstack-glance-registry.service

- $ systemctl status openstack-glance-api.service openstack-glance-registry.service
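The database preparation shown for Keystone and Glance has the same shape each time: one CREATE DATABASE statement followed by grants for the local and remote ('%') hosts. A minimal sketch that prints those statements follows. `render_db_sql` is a hypothetical helper, and it assumes the password contains no single quotes.

```shell
# render_db_sql DATABASE USER PASSWORD
# Print the CREATE DATABASE and GRANT statements for one service database.
# (Hypothetical helper; statement syntax copied from the article.)
render_db_sql() {
  local db=$1 user=$2 password=$3 host
  printf 'CREATE DATABASE %s;\n' "$db"
  for host in localhost '%'; do
    printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
      "$db" "$user" "$host" "$password"
  done
}
```

The output can be reviewed and then piped into the client, for example `render_db_sql glance glance fanguiju | mysql -u root -p`.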

- $ wget http://download.cirros-cloud.net/0.4.0/cirros-0.4.0-x86_64-disk.img

- $ openstack image create "cirros" \

- --file cirros-0.4.0-x86_64-disk.img \

- --disk-format qcow2 --container-format bare \

- --public

- [root@controller ~]# openstack image list

- +--------------------------------------+--------+--------+

- | ID | Name | Status |

- +--------------------------------------+--------+--------+

- | 59355e1b-2342-497b-9863-5c8b9969adf5 | cirros | active |

- +--------------------------------------+--------+--------+

- [root@controller ~]# ll /var/lib/glance/images/

- total 12980

- -rw-r-----. 1 glance glance 13287936 Mar 29 10:33 59355e1b-2342-497b-9863-5c8b9969adf5
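The upload above declares the image as qcow2 through --disk-format. A mismatch between the declared format and the actual file is easier to catch before uploading, and qcow2 files begin with the four bytes "QFI" followed by 0xfb. The sketch below checks only that signature. `is_qcow2` is a hypothetical helper, and `qemu-img info` gives a fuller answer where it is installed.

```shell
# is_qcow2 FILE
# Succeed if FILE starts with the qcow2 magic bytes 'Q' 'F' 'I' 0xfb.
# (Hypothetical helper; checks the signature only, not image integrity.)
is_qcow2() {
  local magic
  magic=$(head -c 4 "$1" | od -An -tx1 | tr -d ' \n')
  [ "$magic" = "514649fb" ]
}
```

For example, `is_qcow2 cirros-0.4.0-x86_64-disk.img && echo "looks like qcow2"` can be run right after the download.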

Nova(Controller)

- $ openstack service create --name nova --description "OpenStack Compute" compute

- $ openstack user create --domain default --password-prompt nova

- $ openstack role add --project service --user nova admin

- $ openstack endpoint create --region RegionOne compute public http://controller:8774/v2.1

- $ openstack endpoint create --region RegionOne compute internal http://controller:8774/v2.1

- $ openstack endpoint create --region RegionOne compute admin http://controller:8774/v2.1

- $ openstack service create --name placement --description "Placement API" placement

- $ openstack user create --domain default --password-prompt placement

- $ openstack role add --project service --user placement admin

- $ openstack endpoint create --region RegionOne placement public http://controller:8778

- $ openstack endpoint create --region RegionOne placement internal http://controller:8778

- $ openstack endpoint create --region RegionOne placement admin http://controller:8778

- [root@controller ~]# openstack catalog list

- +-----------+-----------+-----------------------------------------+

- | Name | Type | Endpoints |

- +-----------+-----------+-----------------------------------------+

- | nova | compute | RegionOne |

- | | | internal: http://controller:8774/v2.1 |

- | | | RegionOne |

- | | | admin: http://controller:8774/v2.1 |

- | | | RegionOne |

- | | | public: http://controller:8774/v2.1 |

- | | | |

- | glance | image | RegionOne |

- | | | admin: http://controller:9292 |

- | | | RegionOne |

- | | | public: http://controller:9292 |

- | | | RegionOne |

- | | | internal: http://controller:9292 |

- | | | |

- | keystone | identity | RegionOne |

- | | | admin: http://controller:5000/v3/ |

- | | | RegionOne |

- | | | public: http://controller:5000/v3/ |

- | | | RegionOne |

- | | | internal: http://controller:5000/v3/ |

- | | | |

- | placement | placement | RegionOne |

- | | | internal: http://controller:8778 |

- | | | RegionOne |

- | | | public: http://controller:8778 |

- | | | RegionOne |

- | | | admin: http://controller:8778 |

- | | | |

- +-----------+-----------+-----------------------------------------+

- $ yum install openstack-nova-api openstack-nova-conductor \

- openstack-nova-console openstack-nova-novncproxy \

- openstack-nova-scheduler openstack-nova-placement-api -y

- # /etc/nova/nova.conf

- [DEFAULT]

- my_ip = 172.18.22.231

- enabled_apis = osapi_compute,metadata

- transport_url = rabbit://openstack:fanguiju@controller

- use_neutron = true

- firewall_driver = nova.virt.firewall.NoopFirewallDriver

- [api_database]

- connection = mysql+pymysql://nova:fanguiju@controller/nova_api

- [database]

- connection = mysql+pymysql://nova:fanguiju@controller/nova

- [placement_database]

- connection = mysql+pymysql://placement:fanguiju@controller/placement

- [api]

- auth_strategy = keystone

- [keystone_authtoken]

- auth_url = http://controller:5000/v3

- memcached_servers = controller:11211

- auth_type = password

- project_domain_name = default

- user_domain_name = default

- project_name = service

- username = nova

- password = fanguiju

- [vnc]

- enabled = true

- server_listen = $my_ip

- server_proxyclient_address = $my_ip

- [glance]

- api_servers = http://controller:9292

- [oslo_concurrency]

- lock_path = /var/lib/nova/tmp

- [placement]

- region_name = RegionOne

- project_domain_name = Default

- project_name = service

- auth_type = password

- user_domain_name = Default

- auth_url = http://controller:5000/v3

- username = placement

- password = fanguiju

- $ CREATE DATABASE nova_api;

- $ CREATE DATABASE nova;

- $ CREATE DATABASE nova_cell0;

- $ CREATE DATABASE placement;

- $ GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'localhost' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'%' IDENTIFIED BY 'fanguiju';

- Initialize the Nova API and Placement databases

- $ su -s /bin/sh -c "nova-manage api_db sync" nova

- $ su -s /bin/sh -c "nova-manage db sync" nova

- $ su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

- $ su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

- $ su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova

- Register the Placement web server with httpd

- # /etc/httpd/conf.d/00-nova-placement-api.conf

- Listen 8778

- <VirtualHost *:8778>

- WSGIProcessGroup nova-placement-api

- WSGIApplicationGroup %{GLOBAL}

- WSGIPassAuthorization On

- WSGIDaemonProcess nova-placement-api processes=3 threads=1 user=nova group=nova

- WSGIScriptAlias / /usr/bin/nova-placement-api

- <IfVersion >= 2.4>

- ErrorLogFormat "%M"

- </IfVersion>

- ErrorLog /var/log/nova/nova-placement-api.log

- #SSLEngine On

- #SSLCertificateFile ...

- #SSLCertificateKeyFile ...

- <Directory /usr/bin>

- <IfVersion >= 2.4>

- Require all granted

- </IfVersion>

- <IfVersion < 2.4>

- Order allow,deny

- Allow from all

- </IfVersion>

- </Directory>

- </VirtualHost>

- Alias /nova-placement-api /usr/bin/nova-placement-api

- <Location /nova-placement-api>

- SetHandler wsgi-script

- Options +ExecCGI

- WSGIProcessGroup nova-placement-api

- WSGIApplicationGroup %{GLOBAL}

- WSGIPassAuthorization On

- </Location>

- $ systemctl restart httpd

- $ systemctl status httpd

- $ systemctl enable openstack-nova-api.service \

- openstack-nova-consoleauth openstack-nova-scheduler.service \

- openstack-nova-conductor.service openstack-nova-novncproxy.service

- $ systemctl start openstack-nova-api.service \

- openstack-nova-consoleauth openstack-nova-scheduler.service \

- openstack-nova-conductor.service openstack-nova-novncproxy.service

- $ systemctl status openstack-nova-api.service \

- openstack-nova-consoleauth openstack-nova-scheduler.service \

- openstack-nova-conductor.service openstack-nova-novncproxy.service

- [root@controller ~]# openstack compute service list

- +----+------------------+------------+----------+---------+-------+----------------------------+

- | ID | Binary | Host | Zone | Status | State | Updated At |

- +----+------------------+------------+----------+---------+-------+----------------------------+

- | 1 | nova-scheduler | controller | internal | enabled | up | 2019-03-29T15:22:51.000000 |

- | 2 | nova-consoleauth | controller | internal | enabled | up | 2019-03-29T15:22:52.000000 |

- | 3 | nova-conductor | controller | internal | enabled | up | 2019-03-29T15:22:51.000000 |

- +----+------------------+------------+----------+---------+-------+----------------------------+

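Nova(Compute)

NOTE: In this plan the Controller also acts as a Compute node, so the following deployment steps must be performed on the Controller as well.

NOTE: In a virtualized lab environment, first check whether nested virtualization is enabled for the virtual machines. e.g.

- [root@controller ~]# egrep -c '(vmx|svm)' /proc/cpuinfo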

- 16

- [root@compute ~]# egrep -c '(vmx|svm)' /proc/cpuinfo

- 16
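The count printed by this check decides the [libvirt] virt_type value in nova.conf. According to the official install guide, a count of zero means the node has no hardware acceleration and libvirt must use qemu, while a count of one or more allows kvm. A minimal sketch of that decision follows; `suggest_virt_type` is a hypothetical helper name.

```shell
# suggest_virt_type [CPUINFO_FILE]
# Print "kvm" if the CPU flags include vmx or svm, otherwise "qemu".
# (Hypothetical helper; defaults to /proc/cpuinfo.)
suggest_virt_type() {
  local cpuinfo=${1:-/proc/cpuinfo} count
  # grep -c exits non-zero when the count is 0, so ignore its exit status.
  count=$(grep -Ec '(vmx|svm)' "$cpuinfo" || true)
  if [ "$count" -gt 0 ]; then
    echo kvm
  else
    echo qemu
  fi
}
```

The nova.conf in this article uses virt_type = qemu, which also works when the count is non-zero, although without hardware acceleration.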

- $ yum install openstack-nova-compute -y

- # /etc/nova/nova.conf

- [DEFAULT]

- my_ip = 172.18.22.232

- enabled_apis = osapi_compute,metadata

- transport_url = rabbit://openstack:fanguiju@controller

- use_neutron = true

- firewall_driver = nova.virt.firewall.NoopFirewallDriver

- compute_driver = libvirt.LibvirtDriver

- instances_path = /var/lib/nova/instances

- [api_database]

- connection = mysql+pymysql://nova:fanguiju@controller/nova_api

- [database]

- connection = mysql+pymysql://nova:fanguiju@controller/nova

- [placement_database]

- connection = mysql+pymysql://placement:fanguiju@controller/placement

- [api]

- auth_strategy = keystone

- [keystone_authtoken]

- auth_url = http://controller:5000/v3

- memcached_servers = controller:11211

- auth_type = password

- project_domain_name = default

- user_domain_name = default

- project_name = service

- username = nova

- password = fanguiju

- [vnc]

- enabled = true

- server_listen = 0.0.0.0

- server_proxyclient_address = $my_ip

- novncproxy_base_url = http://controller:6080/vnc_auto.html

- [glance]

- api_servers = http://controller:9292

- [oslo_concurrency]

- lock_path = /var/lib/nova/tmp

- [placement]

- region_name = RegionOne

- project_domain_name = Default

- project_name = service

- auth_type = password

- user_domain_name = Default

- auth_url = http://controller:5000/v3

- username = placement

- password = fanguiju

- [libvirt]

- virt_type = qemu

- $ systemctl enable libvirtd.service openstack-nova-compute.service

- $ systemctl start libvirtd.service openstack-nova-compute.service

- $ systemctl status libvirtd.service openstack-nova-compute.service

- $ su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova

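TS: If the compute node cannot reach RabbitMQ on the controller, check whether the firewall is blocking the port, then either open the required ports or disable firewalld:

- [root@compute ~]# telnet 172.18.22.231 5672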

- Trying 172.18.22.231...

- telnet: connect to address 172.18.22.231: No route to host

- firewall-cmd --zone=public --permanent --add-port=4369/tcp &&

- firewall-cmd --zone=public --permanent --add-port=25672/tcp &&

- firewall-cmd --zone=public --permanent --add-port=5671-5672/tcp &&

- firewall-cmd --zone=public --permanent --add-port=15672/tcp &&

- firewall-cmd --zone=public --permanent --add-port=61613-61614/tcp &&

- firewall-cmd --zone=public --permanent --add-port=1883/tcp &&

- firewall-cmd --zone=public --permanent --add-port=8883/tcp

- firewall-cmd --reload

- $ systemctl stop firewalld

- $ systemctl disable firewalld
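Opening only the needed ports is an alternative to disabling firewalld altogether. As a minimal sketch, the hypothetical helper `print_firewall_rules` below prints one firewall-cmd line per port plus the final reload, using the same options as the commands above. The RabbitMQ ports shown are only part of what a full deployment needs; the ports of the other services are not listed here.

```shell
# print_firewall_rules PORT...
# Print (not run) a permanent firewall-cmd rule per PORT, then a reload.
# (Hypothetical helper; PORT uses firewalld syntax such as 5672/tcp.)
print_firewall_rules() {
  local port
  for port in "$@"; do
    printf 'firewall-cmd --zone=public --permanent --add-port=%s\n' "$port"
  done
  printf 'firewall-cmd --reload\n'
}
```

For example, `print_firewall_rules 4369/tcp 25672/tcp 5671-5672/tcp` prints the rules for review, and the output can be piped to `sh` on the controller to apply them.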

- Verify: after nova-compute.service has been started on both controller and compute, there are two compute nodes:

- [root@controller ~]# openstack compute service list

- +----+------------------+------------+----------+---------+-------+----------------------------+

- | ID | Binary | Host | Zone | Status | State | Updated At |

- +----+------------------+------------+----------+---------+-------+----------------------------+

- | 1 | nova-scheduler | controller | internal | enabled | up | 2019-03-29T16:15:42.000000 |

- | 2 | nova-consoleauth | controller | internal | enabled | up | 2019-03-29T16:15:44.000000 |

- | 3 | nova-conductor | controller | internal | enabled | up | 2019-03-29T16:15:42.000000 |

- | 6 | nova-compute | controller | nova | enabled | up | 2019-03-29T16:15:41.000000 |

- | 7 | nova-compute | compute | nova | enabled | up | 2019-03-29T16:15:47.000000 |

- +----+------------------+------------+----------+---------+-------+----------------------------+

- # Check the cells and placement API are working successfully:

- [root@controller ~]# nova-status upgrade check

- +--------------------------------+

- | Upgrade Check Results |

- +--------------------------------+

- | Check: Cells v2 |

- | Result: Success |

- | Details: None |

- +--------------------------------+

- | Check: Placement API |

- | Result: Success |

- | Details: None |

- +--------------------------------+

- | Check: Resource Providers |

- | Result: Success |

- | Details: None |

- +--------------------------------+

- | Check: Ironic Flavor Migration |

- | Result: Success |

- | Details: None |

- +--------------------------------+

- | Check: API Service Version |

- | Result: Success |

- | Details: None |

- +--------------------------------+

- | Check: Request Spec Migration |

- | Result: Success |

- | Details: None |

- +--------------------------------+

- | Check: Console Auths |

- | Result: Success |

- | Details: None |

- +--------------------------------+
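The compute service list above can also be checked by a script instead of by eye. The sketch below reads one "Binary Host State" line per service and reports anything that is not up. `list_down_services` is a hypothetical helper, and it assumes the input comes from the client's value formatter restricted to those three columns.

```shell
# Read "Binary Host State" lines on stdin and print the services whose state
# is not "up". Exit status is 0 only when every listed service is up.
# (Hypothetical helper for illustration.)
list_down_services() {
  local binary host state rc=0
  while read -r binary host state; do
    [ -z "$binary" ] && continue          # skip blank lines
    if [ "$state" != "up" ]; then
      printf '%s on %s is %s\n' "$binary" "$host" "$state"
      rc=1
    fi
  done
  return "$rc"
}
```

A possible invocation is `openstack compute service list -f value -c Binary -c Host -c State | list_down_services`.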

Neutron Open vSwitch mechanism driver(Controller)

- $ openstack service create --name neutron --description "OpenStack Networking" network

- $ openstack user create --domain default --password-prompt neutron

- $ openstack role add --project service --user neutron admin

- $ openstack endpoint create --region RegionOne network public http://controller:9696

- $ openstack endpoint create --region RegionOne network internal http://controller:9696

- $ openstack endpoint create --region RegionOne network admin http://controller:9696

- $ yum install openstack-neutron openstack-neutron-ml2 openstack-neutron-openvswitch -y

- $ systemctl enable openvswitch

- $ systemctl start openvswitch

- $ systemctl status openvswitch

- $ ovs-vsctl add-br br-provider

- $ ovs-vsctl add-port br-provider ens224

- [root@controller ~]# ovs-vsctl show

- 8ef8d299-fc4c-407a-a937-5a1058ea3355

- Bridge br-provider

- Port "ens224"

- Interface "ens224"

- Port br-provider

- Interface br-provider

- type: internal

- ovs_version: "2.10.1"

- $ CREATE DATABASE neutron;

- $ GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY 'fanguiju';

- $ GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'fanguiju';

- $ su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf \

- --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

- $ systemctl restart openstack-nova-api.service

- $ systemctl enable neutron-server.service \

- neutron-openvswitch-agent.service neutron-dhcp-agent.service \

- neutron-metadata-agent.service

- $ systemctl start neutron-server.service \

- neutron-openvswitch-agent.service neutron-dhcp-agent.service \

- neutron-metadata-agent.service

- $ systemctl status neutron-server.service \

- neutron-openvswitch-agent.service neutron-dhcp-agent.service \

- neutron-metadata-agent.service

- $ systemctl enable neutron-l3-agent.service

- $ systemctl start neutron-l3-agent.service

- $ systemctl status neutron-l3-agent.service

- [root@controller ~]# ovs-vsctl show

- 8ef8d299-fc4c-407a-a937-5a1058ea3355

- Manager "ptcp:6640:127.0.0.1"

- is_connected: true

- Bridge br-tun

- Controller "tcp:127.0.0.1:6633"

- is_connected: true

- fail_mode: secure

- Port br-tun

- Interface br-tun

- type: internal

- Port patch-int

- Interface patch-int

- type: patch

- options: {peer=patch-tun}

- Bridge br-int

- Controller "tcp:127.0.0.1:6633"

- is_connected: true

- fail_mode: secure

- Port int-br-provider

- Interface int-br-provider

- type: patch

- options: {peer=phy-br-provider}

- Port br-int

- Interface br-int

- type: internal

- Port patch-tun

- Interface patch-tun

- type: patch

- options: {peer=patch-int}

- Bridge br-provider

- Controller "tcp:127.0.0.1:6633"

- is_connected: true

- fail_mode: secure

- Port phy-br-provider

- Interface phy-br-provider

- type: patch

- options: {peer=int-br-provider}

- Port "ens224"

- Interface "ens224"

- Port br-provider

- Interface br-provider

- type: internal

- ovs_version: "2.10.1"
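
The `ovs-vsctl show` output above shows `br-int` wired to `br-tun` and `br-provider` through patch-port pairs, where each side names the other as its `peer`. As a rough sanity check, the following sketch parses such output and reports any patch port whose peer does not point back at it. The sample text is abbreviated from the output above, and the helper names `patch_peers` and `unpaired` are hypothetical, not part of any OVS tooling.

```python
import re

# Abbreviated sample of the `ovs-vsctl show` output above (patch ports only).
SAMPLE = """
Bridge br-tun
    Port patch-int
        Interface patch-int
            type: patch
            options: {peer=patch-tun}
Bridge br-int
    Port int-br-provider
        Interface int-br-provider
            type: patch
            options: {peer=phy-br-provider}
    Port patch-tun
        Interface patch-tun
            type: patch
            options: {peer=patch-int}
Bridge br-provider
    Port phy-br-provider
        Interface phy-br-provider
            type: patch
            options: {peer=int-br-provider}
    Port "ens224"
        Interface "ens224"
"""


def patch_peers(text):
    """Map each patch interface name to the peer named in its options line."""
    peers = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("Interface "):
            current = line.split(None, 1)[1].strip('"')
        else:
            match = re.match(r"options: \{peer=(.+)\}$", line)
            if match and current:
                peers[current] = match.group(1).strip('"')
    return peers


def unpaired(peers):
    """Return patch ports whose peer does not point back at them."""
    return sorted(name for name, peer in peers.items() if peers.get(peer) != name)


peers = patch_peers(SAMPLE)
print(peers)
print("unpaired:", unpaired(peers))
```

On a live node the same functions could be fed the captured output of `ovs-vsctl show`; an empty "unpaired" list suggests every patch link is complete in both directions.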

- $ yum install openstack-neutron-openvswitch ipset -y

- # /etc/neutron/neutron.conf

- [DEFAULT]

- transport_url = rabbit://openstack:fanguiju@controller

- auth_strategy = keystone

- [keystone_authtoken]

- www_authenticate_uri = http://controller:5000

- auth_url = http://controller:5000

- memcached_servers = controller:11211

- auth_type = password

- project_domain_name = default

- user_domain_name = default

- project_name = service

- username = neutron

- password = fanguiju

- [oslo_concurrency]

- lock_path = /var/lib/neutron/tmp

- # /etc/neutron/plugins/ml2/openvswitch_agent.ini

- [ovs]

- local_ip = 10.0.0.2

- [agent]

- tunnel_types = vxlan

- l2_population = True
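
The `local_ip` value must be the compute node's own address on the tunnel network (10.0.0.2 on ens192 in the topology described earlier), otherwise VXLAN tunnels to the controller will not come up. The sketch below reads the settings with Python's `configparser` and flags an address outside the tunnel subnet. It assumes the tunnel network is 10.0.0.0/24 and that the file is plain enough INI for `configparser`; `check_agent_config` is a hypothetical helper name.

```python
import configparser
import ipaddress

# The agent settings from above, inlined so the sketch is self-contained.
AGENT_INI = """
[ovs]
local_ip = 10.0.0.2

[agent]
tunnel_types = vxlan
l2_population = True
"""

# Assumption: the tunnel network is 10.0.0.0/24 (ens192 in the topology section).
TUNNEL_NET = ipaddress.ip_network("10.0.0.0/24")


def check_agent_config(ini_text, tunnel_net=TUNNEL_NET):
    """Return a list of problems found in the [ovs] and [agent] settings."""
    cfg = configparser.ConfigParser()
    cfg.read_string(ini_text)
    problems = []
    local_ip = cfg.get("ovs", "local_ip", fallback=None)
    if local_ip is None:
        problems.append("[ovs] local_ip is missing")
    elif ipaddress.ip_address(local_ip) not in tunnel_net:
        problems.append(f"local_ip {local_ip} is outside {tunnel_net}")
    types = cfg.get("agent", "tunnel_types", fallback="")
    if "vxlan" not in [t.strip() for t in types.split(",")]:
        problems.append("[agent] tunnel_types does not include vxlan")
    return problems


print(check_agent_config(AGENT_INI) or "agent config looks consistent")
```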

- # /etc/nova/nova.conf

- ...

- [neutron]

- url = http://controller:9696

- auth_url = http://controller:5000

- auth_type = password

- project_domain_name = default

- user_domain_name = default

- region_name = RegionOne

- project_name = service

- username = neutron

- password = fanguiju

- $ systemctl enable openvswitch

- $ systemctl start openvswitch

- $ systemctl status openvswitch

- $ systemctl restart openstack-nova-compute.service

- $ systemctl enable neutron-openvswitch-agent.service

- $ systemctl start neutron-openvswitch-agent.service

- $ systemctl status neutron-openvswitch-agent.service

- [root@compute ~]# ovs-vsctl show

- 80d8929a-9dc8-411c-8d20-8f1d0d6e2056

- Manager "ptcp:6640:127.0.0.1"

- is_connected: true

- Bridge br-tun

- Controller "tcp:127.0.0.1:6633"

- is_connected: true

- fail_mode: secure

- Port br-tun

- Interface br-tun

- type: internal

- Port patch-int

- Interface patch-int

- type: patch

- options: {peer=patch-tun}

- Bridge br-int

- Controller "tcp:127.0.0.1:6633"

- is_connected: true

- fail_mode: secure

- Port br-int

- Interface br-int

- type: internal

- Port patch-tun

- Interface patch-tun

- type: patch

- options: {peer=patch-int}

- ovs_version: "2.10.1"

- [root@controller ~]# openstack network agent list

- +--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+

- | ID                                   | Agent Type         | Host       | Availability Zone | Alive | State | Binary                    |

- +--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+

- | 41925586-9119-4709-bc23-4668433bd413 | Metadata agent     | controller | None              | :-)   | UP    | neutron-metadata-agent    |

- | 43281ac1-7699-4a81-a5b6-d4818f8cf8f9 | Open vSwitch agent | controller | None              | :-)   | UP    | neutron-openvswitch-agent |

- | b815e569-c85d-4a37-84ea-7bdc5fe5653c | DHCP agent         | controller | nova              | :-)   | UP    | neutron-dhcp-agent        |

- | d1ef7214-d26c-42c8-ba0b-2a1580a44446 | L3 agent           | controller | nova              | :-)   | UP    | neutron-l3-agent          |

- | f55311fc-635c-4985-ae6b-162f3fa8f886 | Open vSwitch agent | compute    | None              | :-)   | UP    | neutron-openvswitch-agent |

- +--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+
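
The agent list is the main verification step for the Neutron deployment: the controller should report the Metadata, Open vSwitch, DHCP and L3 agents, the compute node should report an Open vSwitch agent, and all of them should be alive and up. A minimal sketch of checking this automatically, assuming the table text has been captured from the CLI; `parse_table` and `dead_agents` are hypothetical helper names.

```python
# Table text as printed by `openstack network agent list` (copied from above).
AGENT_TABLE = """
+----+------------+------+-------------------+-------+-------+--------+
| ID | Agent Type | Host | Availability Zone | Alive | State | Binary |
+----+------------+------+-------------------+-------+-------+--------+
| 41925586-9119-4709-bc23-4668433bd413 | Metadata agent | controller | None | :-) | UP | neutron-metadata-agent |
| 43281ac1-7699-4a81-a5b6-d4818f8cf8f9 | Open vSwitch agent | controller | None | :-) | UP | neutron-openvswitch-agent |
| b815e569-c85d-4a37-84ea-7bdc5fe5653c | DHCP agent | controller | nova | :-) | UP | neutron-dhcp-agent |
| d1ef7214-d26c-42c8-ba0b-2a1580a44446 | L3 agent | controller | nova | :-) | UP | neutron-l3-agent |
| f55311fc-635c-4985-ae6b-162f3fa8f886 | Open vSwitch agent | compute | None | :-) | UP | neutron-openvswitch-agent |
+----+------------+------+-------------------+-------+-------+--------+
"""


def parse_table(text):
    """Parse an ASCII table into a list of dicts keyed by the header row."""
    rows = [
        [cell.strip() for cell in line.strip().strip("|").split("|")]
        for line in text.splitlines()
        if line.strip().startswith("|")
    ]
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]


def dead_agents(agents):
    """Return (host, binary) for agents not shown as alive and up."""
    return [
        (a["Host"], a["Binary"])
        for a in agents
        if a["Alive"] != ":-)" or a["State"] != "UP"
    ]


agents = parse_table(AGENT_TABLE)
print(sorted((a["Host"], a["Agent Type"]) for a in agents))
print("not healthy:", dead_agents(agents))
```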

- $ yum install openstack-dashboard -y

- # /etc/openstack-dashboard/local_settings

- ...

- OPENSTACK_HOST = "controller"

- ...

- # Allow all hosts to access the dashboard

- ALLOWED_HOSTS = ['*', ]

- ...

- # Configure the memcached session storage service

- SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

- CACHES = {

- 'default': {

- 'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',

- 'LOCATION': 'controller:11211',

- }

- }

- ...

- # Enable the Identity API version 3

- OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST

- ...

- # Enable support for domains

- OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

- ...

- # Configure API versions

- OPENSTACK_API_VERSIONS = {

- "identity": 3,

- "image": 2,

- "volume": 2,

- }

- ...

- OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = 'Default'

- ...

- OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"

- ...

- OPENSTACK_NEUTRON_NETWORK = {

- 'enable_router': True,

- 'enable_quotas': True,

- 'enable_ipv6': True,

- 'enable_distributed_router': False,

- 'enable_lb': False,

- 'enable_firewall': False,

- 'enable_vpn': False,

- 'enable_ha_router': False,

- 'enable_fip_topology_check': True,

- 'supported_vnic_types': ['*'],

- 'physical_networks': [],

- }

- # /etc/httpd/conf.d/openstack-dashboard.conf

- WSGIDaemonProcess dashboard

- WSGIProcessGroup dashboard

- WSGISocketPrefix run/wsgi

- WSGIApplicationGroup %{GLOBAL}

- WSGIScriptAlias /dashboard /usr/share/openstack-dashboard/openstack_dashboard/wsgi/django.wsgi

- Alias /dashboard/static /usr/share/openstack-dashboard/static

- <Directory /usr/share/openstack-dashboard/openstack_dashboard/wsgi>

- Options All

- AllowOverride All

- Require all granted

- </Directory>

- <Directory /usr/share/openstack-dashboard/static>

- Options All

- AllowOverride All

- Require all granted

- </Directory>

- $ systemctl restart httpd.service memcached.service

- $ systemctl status httpd.service memcached.service

- Verify: open http://controller/dashboard in a browser and confirm that the login page loads.

Cinder(Controller)

- $ yum install lvm2 device-mapper-persistent-data -y

- $ cat /etc/lvm/lvm.conf

- devices {

- ...

- filter = [ "a/sdb/", "r/.*/"]
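
The filter above tells LVM to scan only `sdb` (the Cinder disk) and ignore every other block device. Patterns are evaluated in order and the first match decides: `a` accepts, `r` rejects. The sketch below emulates that rule for single device paths. It is a simplification: only the `/` delimiter is handled and device aliases are ignored, and `lvm_filter_accepts` is a hypothetical helper name.

```python
import re

# Filter from the lvm.conf excerpt above: accept sdb, reject everything else.
FILTER = ["a/sdb/", "r/.*/"]


def lvm_filter_accepts(device, patterns=FILTER):
    """Emulate first-match-wins evaluation of an LVM device filter.

    Simplification: only the "/" delimiter is handled, and a device that
    matches no pattern at all is accepted.
    """
    for pattern in patterns:
        action, regex = pattern[0], pattern[2:-1]
        if re.search(regex, device):
            return action == "a"
    return True


for dev in ("/dev/sda", "/dev/sda2", "/dev/sdb", "/dev/sdb1"):
    print(dev, "accepted" if lvm_filter_accepts(dev) else "rejected")
```

Note that if the operating system disk itself uses LVM, its device would also need an accept pattern ahead of the final reject pattern.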

- $ systemctl enable lvm2-lvmetad.service

- $ systemctl start lvm2-lvmetad.service

- $ systemctl status lvm2-lvmetad.service

- $ pvcreate /dev/sdb

- $ vgcreate cinder-volumes /dev/sdb

- $ openstack service create --name cinderv2 --description "OpenStack Block Storage" volumev2

- $ openstack service create --name cinderv3 --description "OpenStack Block Storage" volumev3

- $ openstack user create --domain default --password-prompt cinder

- $ openstack role add --project service --user cinder admin

- $ openstack endpoint create --region RegionOne volumev2 public http://controller:8776/v2/%\(project_id\)s

- $ openstack endpoint create --region RegionOne volumev2 internal http://controller:8776/v2/%\(project_id\)s

- $ openstack endpoint create --region RegionOne volumev2 admin http://controller:8776/v2/%\(project_id\)s

- $ openstack endpoint create --region RegionOne volumev3 public http://controller:8776/v3/%\(project_id\)s

- $ openstack endpoint create --region RegionOne volumev3 internal http://controller:8776/v3/%\(project_id\)s

- $ openstack endpoint create --region RegionOne volumev3 admin http://controller:8776/v3/%\(project_id\)s
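
In the endpoint URLs above, `%\(project_id\)s` is a placeholder: the backslashes only protect the parentheses from the shell, and the stored value is `%(project_id)s`. When a catalog is built for a token, the placeholder is replaced with the ID of the project the token is scoped to, which is why the `openstack catalog list` output in this section shows a concrete ID in the Cinder URLs. The sketch below approximates that substitution with plain Python `%`-formatting; `render_endpoint` is a hypothetical helper, not a Keystone API.

```python
# Endpoint URL as stored in Keystone (shell escapes removed).
TEMPLATE = "http://controller:8776/v3/%(project_id)s"


def render_endpoint(template, project_id):
    """Fill the %(project_id)s placeholder using Python %-formatting."""
    return template % {"project_id": project_id}


# Project ID taken from the `openstack catalog list` output in this section.
print(render_endpoint(TEMPLATE, "a2b55e37121042a1862275a9bc9b0223"))
```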

- [root@controller ~]# openstack catalog list

- +-----------+-----------+------------------------------------------------------------------------+

- | Name | Type | Endpoints |

- +-----------+-----------+------------------------------------------------------------------------+

- | nova | compute | RegionOne |

- | | | internal: http://controller:8774/v2.1 |

- | | | RegionOne |

- | | | admin: http://controller:8774/v2.1 |

- | | | RegionOne |

- | | | public: http://controller:8774/v2.1 |

- | | | |

- | cinderv2 | volumev2 | RegionOne |

- | | | admin: http://controller:8776/v2/a2b55e37121042a1862275a9bc9b0223 |

- | | | RegionOne |

- | | | public: http://controller:8776/v2/a2b55e37121042a1862275a9bc9b0223 |

- | | | RegionOne |

- | | | internal: http://controller:8776/v2/a2b55e37121042a1862275a9bc9b0223 |

- | | | |

- | neutron | network | RegionOne |

- | | | internal: http://controller:9696 |

- | | | RegionOne |

- | | | admin: http://controller:9696 |

- | | | RegionOne |

- | | | public: http://controller:9696 |

- | | | |

- | glance | image | RegionOne |

- | | | admin: http://controller:9292 |

- | | | RegionOne |

- | | | public: http://controller:9292 |

- | | | RegionOne |

- | | | internal: http://controller:9292 |

- | | | |

- | keystone | identity | RegionOne |

- | | | admin: http://controller:5000/v3/ |

- | | | RegionOne |

- | | | public: http://controller:5000/v3/ |

- | | | RegionOne |

- | | | internal: http://controller:5000/v3/ |

- | | | |

- | placement | placement | RegionOne |

- | | | internal: http://controller:8778 |

- | | | RegionOne |

- | | | public: http://controller:8778 |

- | | | RegionOne |

- | | | admin: http://controller:8778 |

- | | | |

- | cinderv3 | volumev3 | RegionOne |

- | | | internal: http://controller:8776/v3/a2b55e37121042a1862275a9bc9b0223 |

- | | | RegionOne |

- | | | admin: http://controller:8776/v3/a2b55e37121042a1862275a9bc9b0223 |

- | | | RegionOne |

- | | | public: http://controller:8776/v3/a2b55e37121042a1862275a9bc9b0223 |

- | | | |

- +-----------+-----------+------------------------------------------------------------------------+

- $ yum install openstack-cinder targetcli python-keystone -y

- # /etc/cinder/cinder.conf

- [DEFAULT]

- my_ip = 172.18.22.231

- enabled_backends = lvm

- auth_strategy = keystone

- transport_url = rabbit://openstack:fanguiju@controller

- glance_api_servers = http://controller:9292

- [database]

- connection = mysql+pymysql://cinder:fanguiju@controller/cinder

- [keystone_authtoken]

- www_authenticate_uri = http://controller:5000

- auth_url = http://controller:5000

- memcached_servers = controller:11211

- auth_type = password

- project_domain_id = default

- user_domain_id = default

- project_name = service

- username = cinder

- password = fanguiju

- [oslo_concurrency]

- lock_path = /var/lib/cinder/tmp

- [lvm]

- volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver

- volume_group = cinder-volumes

- iscsi_protocol = iscsi

- iscsi_helper = lioadm
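
Two values in this file tie back to earlier steps: `connection` must match the database, user and password granted in MySQL, and `transport_url` must match the RabbitMQ user created in the base services section. A small sketch that splits both URLs into their parts with the standard library so they can be compared by eye; `describe` is a hypothetical helper name.

```python
from urllib.parse import urlsplit


def describe(url):
    """Split a service URL into the parts that must match earlier setup steps."""
    parts = urlsplit(url)
    return {
        "scheme": parts.scheme,
        "user": parts.username,
        "password": parts.password,
        "host": parts.hostname,
        "path": parts.path.lstrip("/"),
    }


# Values copied from the cinder.conf listing above.
db = describe("mysql+pymysql://cinder:fanguiju@controller/cinder")
mq = describe("rabbit://openstack:fanguiju@controller")
print(db)
print(mq)
```

For the database URL, `path` is the database name and `user` is the account named in the `GRANT` statements; for the message queue URL, `path` is empty.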

- # /etc/nova/nova.conf

- [cinder]

- os_region_name = RegionOne

- mysql> CREATE DATABASE cinder;

- mysql> GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'localhost' IDENTIFIED BY 'fanguiju';

- mysql> GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'%' IDENTIFIED BY 'fanguiju';

- $ su -s /bin/sh -c "cinder-manage db sync" cinder

- $ systemctl restart openstack-nova-api.service

- $ systemctl enable openstack-cinder-api.service openstack-cinder-scheduler.service

- $ systemctl start openstack-cinder-api.service openstack-cinder-scheduler.service

- $ systemctl status openstack-cinder-api.service openstack-cinder-scheduler.service

- $ systemctl enable openstack-cinder-volume.service target.service

- $ systemctl start openstack-cinder-volume.service target.service

- $ systemctl status openstack-cinder-volume.service target.service

- [root@controller ~]# openstack volume service list

- +------------------+----------------+------+---------+-------+----------------------------+

- | Binary           | Host           | Zone | Status  | State | Updated At                 |

- +------------------+----------------+------+---------+-------+----------------------------+

- | cinder-scheduler | controller     | nova | enabled | up    | 2019-04-25T09:26:49.000000 |

- | cinder-volume    | controller@lvm | nova | enabled | up    | 2019-04-25T09:26:49.000000 |

- +------------------+----------------+------+---------+-------+----------------------------+
